From 7:00 PM

To 7:40 PM

GMT

Tags:

Stage 1

Presentation

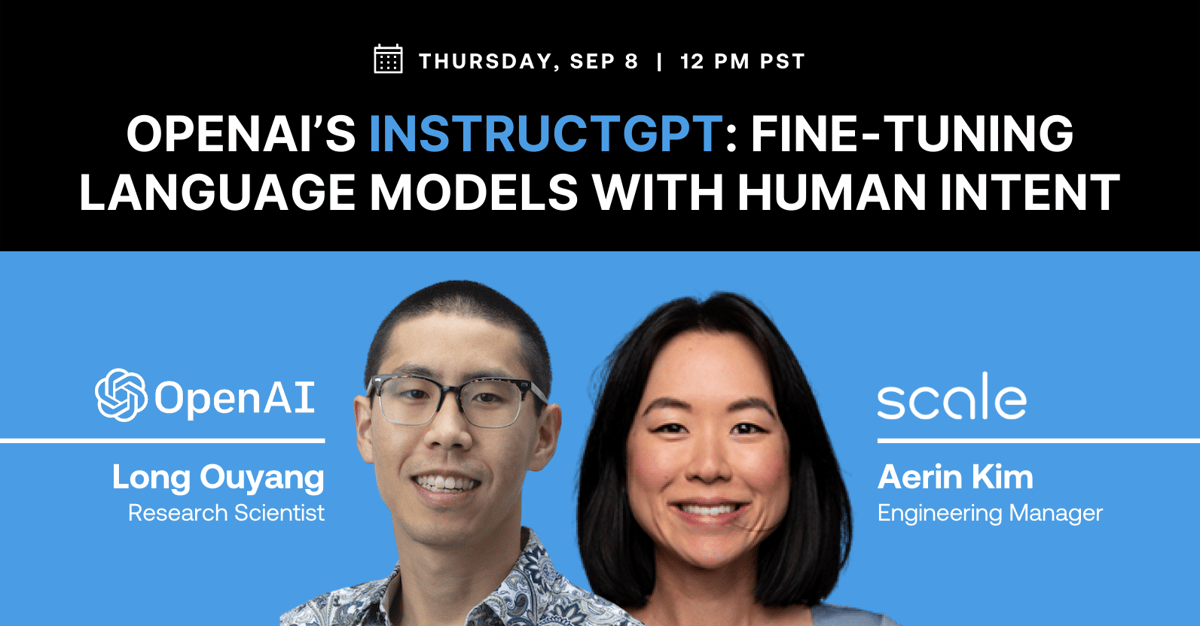

Streamed presentation: InstructGPT

Speakers: Long Ouyang (OpenAI), Aerin Kim (Scale AI)

Making language models bigger does not inherently make them better at following a user's intent. For example, large language models can generate outputs that are untruthful, toxic, or simply not helpful to the user. In other words, these models are not aligned with their users. In this presentation, Long Ouyang, Research Scientist at OpenAI, and Aerin Kim, Engineering Manager at Scale AI, will explore an avenue for aligning language models with user intent on a wide range of tasks by fine-tuning with human feedback.

Join Long, OpenAI Research Scientist, and Aerin, Scale AI Engineering Manager, for a technical presentation followed by a discussion of Long's work on InstructGPT and an audience Q&A.